2018 Workshop on Interpretable & Reasonable Deep Learning and its Applications (IReDLiA)

Stockholm, Sweden, 13 July 2018 (Room: K2, Time: 1500-1730)

Overview:

Learning and reasoning are two fundamental abilities of intelligence. While machine learning has achieved record-breaking successes in the last decade thanks to rapidly developing deep learning techniques, neural network models are nevertheless perceived as black boxes. There is a compelling need to interpret and visualize the representations learned and the decisions made by NN models, especially in sensitive applications such as medical diagnosis or autonomous driving, where even rare mistakes can be costly or fatal. Moreover, the ability to assemble trainable networks, and thus combine previously acquired knowledge, plays an extremely important role in constructing reasoning systems.

Topics:

- Visualization and distillation of deep learning models

- Analysis and comparison of methods to interpret & visualize deep learning models

- Machine reasoning systems via first-order logic, fuzzy logic, probabilistic reasoning, causal reasoning, spatial reasoning and social reasoning

- Industrial applications in medical diagnosis, autonomous driving, etc.

- Safe AI and AI ethics

(NEW) SCHEDULE:

- 1500-1510 : Welcome Remarks and Opening

- 1510-1535 : Golden Ratio: A Facial Attractive Attribute Learned by CNN

- 1535-1600 : VectorDefense: Vectorization as a Defense to Adversarial Examples

- 1600-1625 : Learning Qualitatively Diverse and Interpretable Rules for Classification

- 1625-1650 : Fuzzy Inference on Time-varying Universes

- 1650-1715 : On the Convergence of Deep Unrolling: From Learnable Optimization to Interpretable Deep Networks

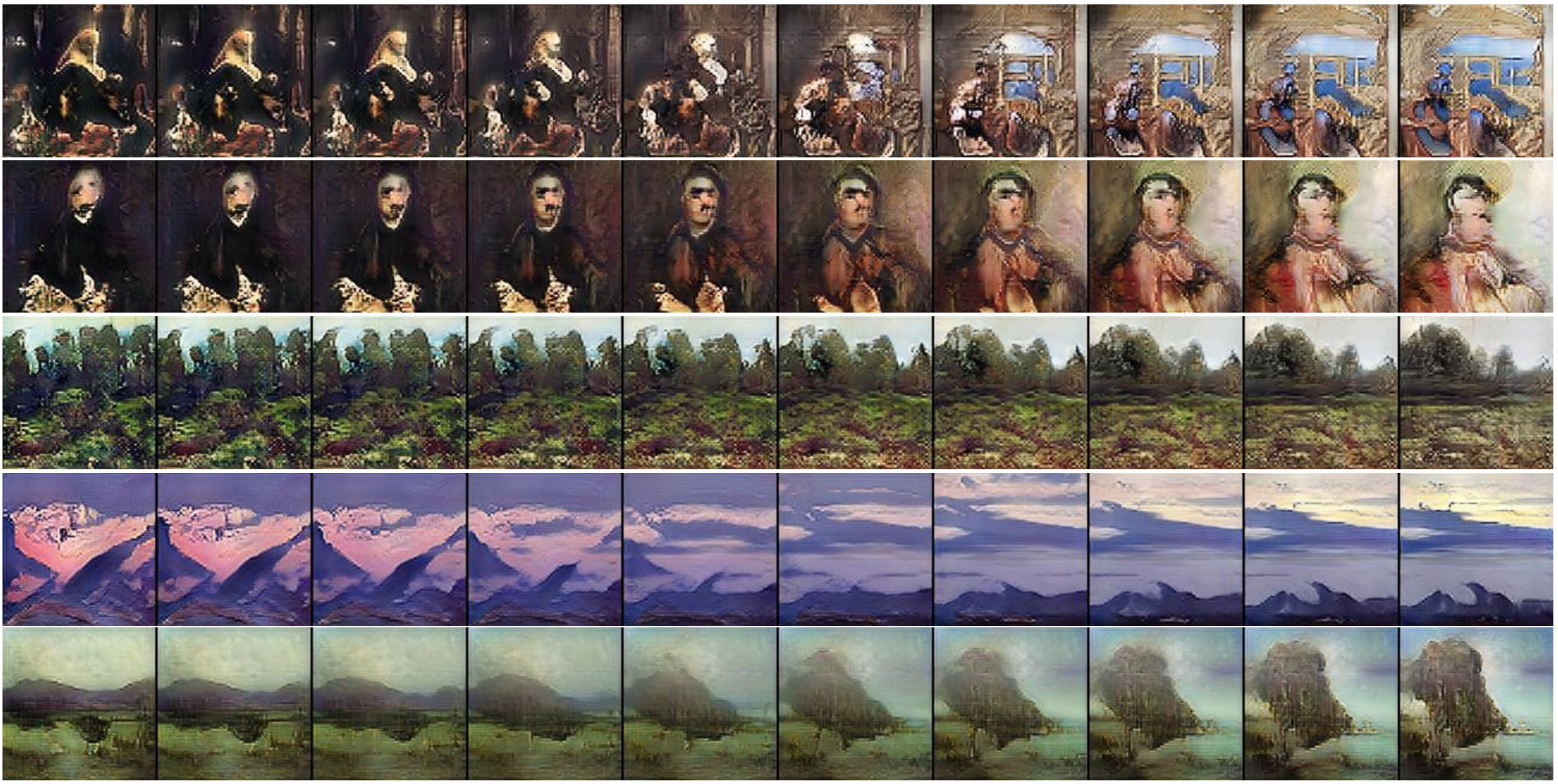

- 1715-1740 : On Visual Hallmarks of Robustness to Adversarial Malware

Important Dates:

- Submission Deadline: 01 May 2018

- Notifications: 27 May 2018

- Camera Ready: 19 June 2018

- Workshop Date: 13 July 2018 (Room: K2, Time: 1500-1730)

Venue and Registration:

The workshop will take place at Stockholmsmässan, Stockholm, Sweden. Please consult the main IJCAI-ECAI website for details on registration.

Nokia Technologies

lixin.fan at nokia.com

University of Malaya, Malaysia

cs.chan at um.edu.my

Chinese Academy of Sciences, PR China

feiyue.wang at ia.ac.cn

Xidian University, PR China

xliang at xidian.edu.cn